Kubernetes - Upgrade

Alasta 23 August 2024 kubernetes kubernetes node upgrade

Description: Kubernetes, upgrading a cluster

Upgrading a Kubernetes cluster

Software versions

Before upgrading a Kubernetes cluster, you need to look at its versions and understand them.

Find out which version your cluster is running (k is an alias for kubectl):

k get nodes

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 4d1h v1.30.0

node01 Ready <none> 4d1h v1.30.0

The Kubernetes version is shown in the VERSION column.
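When only the node name and version matter, the table can be filtered. The following is a minimal sketch, assuming the default column layout in which VERSION is the last column; the function name node_versions is made up for this example.

```shell
# Print "<node> <version>" for each node.
# Reads the output of `kubectl get nodes` on stdin and assumes the default
# layout: one header line, then one line per node with VERSION last.
node_versions() {
  awk 'NR > 1 && NF > 0 { print $1, $NF }'
}

# Usage against a live cluster (assumes kubectl is configured):
#   kubectl get nodes | node_versions
```

The version printed here is the kubelet version reported by each node, which is why it only changes once the kubelet package has been upgraded.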

A Kubernetes release goes through alpha and beta phases before becoming stable.

No core component may run a version newer than kube-apiserver.
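This rule can be expressed as a small check. The sketch below compares minor versions only, which is an assumption of this example (it ignores the major and patch numbers); the function names are hypothetical.

```shell
# Extract the minor number from a version such as "v1.30.0" or "1.30.0".
minor_of() {
  echo "$1" | sed -e 's/^v//' | cut -d. -f2
}

# Succeeds when the component (first argument) is not newer than
# kube-apiserver (second argument). Only minor versions are compared.
not_newer_than_apiserver() {
  [ "$(minor_of "$1")" -le "$(minor_of "$2")" ]
}

# Example:
#   not_newer_than_apiserver v1.29.0 v1.30.0 && echo "ok"
```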

See the official documentation on version compatibility between components (the version skew policy).

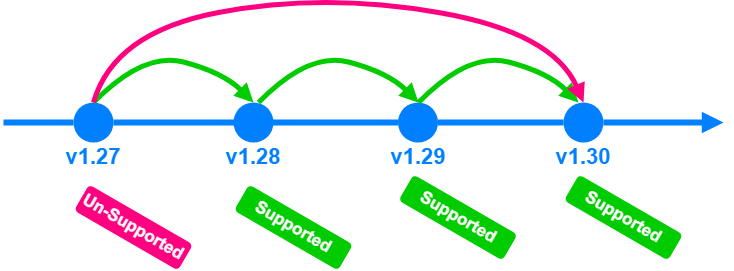

Kubernetes only supports the three most recent minor versions.

The upgrade must be done one minor version at a time.
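To make the "one minor version at a time" rule concrete, here is a minimal sketch of a guard that could run before an upgrade. It assumes both versions share the same major number and looks only at the minor number; the function name is hypothetical.

```shell
# Succeeds when going from the current version ($1) to the target ($2)
# stays within the same minor release or moves up by exactly one minor.
# The body runs in a subshell so its variables do not leak.
is_single_minor_step() (
  cur=$(echo "$1" | sed -e 's/^v//' | cut -d. -f2)
  tgt=$(echo "$2" | sed -e 's/^v//' | cut -d. -f2)
  step=$((tgt - cur))
  [ "$step" -eq 0 ] || [ "$step" -eq 1 ]
)

# Example:
#   is_single_minor_step v1.29.0 v1.31.0 || echo "upgrade to v1.30 first"
```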

Upgrade

There are three ways to upgrade a Kubernetes cluster:

- The cloud provider's interface, which does it automatically

- Using kubeadm

- Manually upgrading each component

Upgrade the control plane first, then the worker nodes.

While the control plane is being upgraded, existing pods keep running on the nodes, but no new pods can be created and no administrative actions (for example kubectl commands) are possible.
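Because the API is unavailable for a while, a script that continues after the control-plane upgrade may want to wait until the API server answers again. The following is a minimal sketch of a generic retry helper; the name wait_for and the WAIT_INTERVAL variable are assumptions of this example.

```shell
# Retry a command until it succeeds or the attempts run out.
# Usage: wait_for <attempts> <command> [args...]
# WAIT_INTERVAL (seconds between attempts, default 5) is a made-up knob.
# The body runs in a subshell, so "exit" only ends the function call.
wait_for() (
  attempts=$1
  shift
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      exit 0
    fi
    sleep "${WAIT_INTERVAL:-5}"
    i=$((i + 1))
  done
  exit 1
)

# Example: wait up to 5 minutes for the API server readiness endpoint.
#   wait_for 60 kubectl get --raw /readyz
```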

There are several strategies for upgrading the nodes:

- All at once: the applications become unavailable

- One node at a time

- Add a new node (running the newer version) to the cluster, move the workload onto it, remove the old node, and repeat for each node.
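The "one node at a time" strategy can be sketched as a loop. This is an illustration only: upgrade_node_packages is a hypothetical hook that you would have to provide (for example, running the package upgrade on the node over SSH), and DRY_RUN is a made-up switch that prints the commands instead of running them.

```shell
# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Upgrade the given nodes one after another: drain, upgrade, uncordon.
# Stops at the first failing step so a broken node is not put back in service.
rolling_upgrade() {
  for node in "$@"; do
    run kubectl drain "$node" --ignore-daemonsets || return 1
    run upgrade_node_packages "$node" || return 1   # hypothetical hook
    run kubectl uncordon "$node" || return 1
  done
}

# Example (prints the plan without touching the cluster):
#   DRY_RUN=1 rolling_upgrade node01 node02
```

Stopping at the first failure keeps the remaining nodes on the old version and still serving traffic, which is the point of upgrading one node at a time.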

Version before the upgrade

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 48m v1.29.0

node01 Ready <none> 47m v1.29.0

Highest version reachable with the currently installed kubeadm

kubeadm upgrade plan

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade] Fetching available versions to upgrade to

[upgrade/versions] Cluster version: v1.29.0

[upgrade/versions] kubeadm version: v1.29.0

I0824 18:38:02.752302 23620 version.go:256] remote version is much newer: v1.31.0; falling back to: stable-1.29

[upgrade/versions] Target version: v1.29.8

[upgrade/versions] Latest version in the v1.29 series: v1.29.8

Components that must be upgraded manually after you have upgraded the control plane with 'kubeadm upgrade apply':

COMPONENT CURRENT TARGET

kubelet 2 x v1.29.0 v1.29.8

Upgrade to the latest version in the v1.29 series:

COMPONENT CURRENT TARGET

kube-apiserver v1.29.0 v1.29.8

kube-controller-manager v1.29.0 v1.29.8

kube-scheduler v1.29.0 v1.29.8

kube-proxy v1.29.0 v1.29.8

CoreDNS v1.10.1 v1.11.1

etcd 3.5.10-0 3.5.10-0

You can now apply the upgrade by executing the following command:

kubeadm upgrade apply v1.29.8

Note: Before you can perform this upgrade, you have to update kubeadm to v1.29.8.

_____________________________________________________________________

The table below shows the current state of component configs as understood by this version of kubeadm.

Configs that have a "yes" mark in the "MANUAL UPGRADE REQUIRED" column require manual config upgrade or

resetting to kubeadm defaults before a successful upgrade can be performed. The version to manually

upgrade to is denoted in the "PREFERRED VERSION" column.

API GROUP CURRENT VERSION PREFERRED VERSION MANUAL UPGRADE REQUIRED

kubeproxy.config.k8s.io v1alpha1 v1alpha1 no

kubelet.config.k8s.io v1beta1 v1beta1 no

_____________________________________________________________________

Starting the upgrade: draining the control plane

k drain controlplane --ignore-daemonsets

node/controlplane already cordoned

Warning: ignoring DaemonSet-managed Pods: kube-flannel/kube-flannel-ds-b8vx6, kube-system/kube-proxy-mzkv8

evicting pod kube-system/coredns-69f9c977-x8cvb

evicting pod default/blue-667bf6b9f9-jhmqs

evicting pod kube-system/coredns-69f9c977-4vft7

evicting pod default/blue-667bf6b9f9-4kjzv

pod/blue-667bf6b9f9-jhmqs evicted

pod/blue-667bf6b9f9-4kjzv evicted

pod/coredns-69f9c977-4vft7 evicted

pod/coredns-69f9c977-x8cvb evicted

node/controlplane drained

Checking that the control plane is drained

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready,SchedulingDisabled control-plane 52m v1.29.0

node01 Ready <none> 51m v1.29.0

From the control plane

Add the Kubernetes repository for the target version (N+1)
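The N+1 target and the matching repository line can both be derived from the current version. The sketch below assumes the pkgs.k8s.io URL layout and the keyring path used in this article; the function names are hypothetical.

```shell
# Print the next minor release ("1.30") for a version such as "v1.29.0".
# The body runs in a subshell so its variables do not leak.
next_minor() (
  v=${1#v}            # drop a leading "v"
  major=${v%%.*}      # "1"
  rest=${v#*.}        # "29.0"
  minor=${rest%%.*}   # "29"
  echo "$major.$((minor + 1))"
)

# Print the apt source line for a given minor release such as "1.30".
k8s_apt_source() {
  echo "deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v$1/deb/ /"
}

# Example (prints the line; redirect it to the sources file as root if it looks right):
#   k8s_apt_source "$(next_minor v1.29.0)"
```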

Add the Kubernetes v1.30 repo:

vim /etc/apt/sources.list.d/kubernetes.list

deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.30/deb/ /

Update the package lists:

apt update

apt-cache madison kubeadm

apt-get install kubeadm=1.30.0-1.1

Plan the control-plane upgrade:

kubeadm upgrade plan v1.30.0

[upgrade/config] Making sure the configuration is correct:

[preflight] Running pre-flight checks.

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[upgrade] Running cluster health checks

[upgrade] Fetching available versions to upgrade to

[upgrade/versions] Cluster version: 1.29.0

[upgrade/versions] kubeadm version: v1.30.0

[upgrade/versions] Target version: v1.30.0

[upgrade/versions] Latest version in the v1.29 series: v1.30.0

Components that must be upgraded manually after you have upgraded the control plane with 'kubeadm upgrade apply':

COMPONENT NODE CURRENT TARGET

kubelet controlplane v1.29.0 v1.30.0

kubelet node01 v1.29.0 v1.30.0

Upgrade to the latest version in the v1.29 series:

COMPONENT NODE CURRENT TARGET

kube-apiserver controlplane v1.29.0 v1.30.0

kube-controller-manager controlplane v1.29.0 v1.30.0

kube-scheduler controlplane v1.29.0 v1.30.0

kube-proxy 1.29.0 v1.30.0

CoreDNS v1.10.1 v1.11.1

etcd controlplane 3.5.10-0 3.5.12-0

You can now apply the upgrade by executing the following command:

kubeadm upgrade apply v1.30.0

_____________________________________________________________________

The table below shows the current state of component configs as understood by this version of kubeadm.

Configs that have a "yes" mark in the "MANUAL UPGRADE REQUIRED" column require manual config upgrade or

resetting to kubeadm defaults before a successful upgrade can be performed. The version to manually

upgrade to is denoted in the "PREFERRED VERSION" column.

API GROUP CURRENT VERSION PREFERRED VERSION MANUAL UPGRADE REQUIRED

kubeproxy.config.k8s.io v1alpha1 v1alpha1 no

kubelet.config.k8s.io v1beta1 v1beta1 no

_____________________________________________________________________

Upgrading the control plane

kubeadm upgrade apply v1.30.0

[upgrade/config] Making sure the configuration is correct:

[preflight] Running pre-flight checks.

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.30.0"

[upgrade/versions] Cluster version: v1.29.0

[upgrade/versions] kubeadm version: v1.30.0

[upgrade] Are you sure you want to proceed? [y/N]: y

[upgrade/prepull] Pulling images required for setting up a Kubernetes cluster

[upgrade/prepull] This might take a minute or two, depending on the speed of your internet connection

[upgrade/prepull] You can also perform this action in beforehand using 'kubeadm config images pull'

W0824 18:45:13.341306 27237 checks.go:844] detected that the sandbox image "registry.k8s.io/pause:3.8" of the container runtime is inconsistent with that used by kubeadm.It is recommended to use "registry.k8s.io/pause:3.9" as the CRI sandbox image.

[upgrade/apply] Upgrading your Static Pod-hosted control plane to version "v1.30.0" (timeout: 5m0s)...

[upgrade/etcd] Upgrading to TLS for etcd

[upgrade/staticpods] Preparing for "etcd" upgrade

[upgrade/staticpods] Renewing etcd-server certificate

[upgrade/staticpods] Renewing etcd-peer certificate

[upgrade/staticpods] Renewing etcd-healthcheck-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/etcd.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2024-08-24-18-45-31/etcd.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=etcd

[upgrade/staticpods] Component "etcd" upgraded successfully!

[upgrade/etcd] Waiting for etcd to become available

[upgrade/staticpods] Writing new Static Pod manifests to "/etc/kubernetes/tmp/kubeadm-upgraded-manifests1006185316"

[upgrade/staticpods] Preparing for "kube-apiserver" upgrade

[upgrade/staticpods] Renewing apiserver certificate

[upgrade/staticpods] Renewing apiserver-kubelet-client certificate

[upgrade/staticpods] Renewing front-proxy-client certificate

[upgrade/staticpods] Renewing apiserver-etcd-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-apiserver.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2024-08-24-18-45-31/kube-apiserver.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-apiserver

[upgrade/staticpods] Component "kube-apiserver" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-controller-manager" upgrade

[upgrade/staticpods] Renewing controller-manager.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-controller-manager.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2024-08-24-18-45-31/kube-controller-manager.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-controller-manager

[upgrade/staticpods] Component "kube-controller-manager" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-scheduler" upgrade

[upgrade/staticpods] Renewing scheduler.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-scheduler.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2024-08-24-18-45-31/kube-scheduler.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-scheduler

[upgrade/staticpods] Component "kube-scheduler" upgraded successfully!

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upgrade] Backing up kubelet config file to /etc/kubernetes/tmp/kubeadm-kubelet-config854545160/config.yaml

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

[upgrade/successful] SUCCESS! Your cluster was upgraded to "v1.30.0". Enjoy!

[upgrade/kubelet] Now that your control plane is upgraded, please proceed with upgrading your kubelets if you haven't already done so.

Upgrade the kubelet and restart the service

apt-get install kubelet=1.30.0-1.1

systemctl daemon-reload

systemctl restart kubelet

Check the control-plane version from an admin workstation

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready,SchedulingDisabled control-plane 62m v1.30.0

node01 Ready <none> 61m v1.29.0

Returning the control plane to service

k uncordon controlplane

node/controlplane uncordoned

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 62m v1.30.0

node01 Ready <none> 61m v1.29.0

Draining the worker node

k drain node01 --ignore-daemonsets

node/node01 already cordoned

Warning: ignoring DaemonSet-managed Pods: kube-flannel/kube-flannel-ds-4gf6n, kube-system/kube-proxy-qcrfd

evicting pod kube-system/coredns-7db6d8ff4d-z7xp7

evicting pod default/blue-667bf6b9f9-nmsc2

evicting pod default/blue-667bf6b9f9-sl4fr

evicting pod default/blue-667bf6b9f9-nxcqv

evicting pod default/blue-667bf6b9f9-77hbh

evicting pod default/blue-667bf6b9f9-g2hmd

evicting pod kube-system/coredns-7db6d8ff4d-k9kdz

I0824 18:50:58.953556 33770 request.go:697] Waited for 1.001768588s due to client-side throttling, not priority and fairness, request: GET:https://controlplane:6443/api/v1/namespaces/kube-system/pods/coredns-7db6d8ff4d-z7xp7

I0824 18:51:08.954256 33770 request.go:697] Waited for 1.003116232s due to client-side throttling, not priority and fairness, request: GET:https://controlplane:6443/api/v1/namespaces/default/pods/blue-667bf6b9f9-nxcqv

pod/blue-667bf6b9f9-77hbh evicted

pod/blue-667bf6b9f9-nxcqv evicted

pod/blue-667bf6b9f9-nmsc2 evicted

pod/blue-667bf6b9f9-sl4fr evicted

pod/blue-667bf6b9f9-g2hmd evicted

pod/coredns-7db6d8ff4d-z7xp7 evicted

pod/coredns-7db6d8ff4d-k9kdz evicted

node/node01 drained

From the worker node

Add the repository

vim /etc/apt/sources.list.d/kubernetes.list

deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.30/deb/ /

Update the packages

apt update

apt-cache madison kubeadm

apt-get install kubeadm=1.30.0-1.1

Upgrading the node

kubeadm upgrade node

[upgrade] Reading configuration from the cluster...

[upgrade] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[preflight] Running pre-flight checks

[preflight] Skipping prepull. Not a control plane node.

[upgrade] Skipping phase. Not a control plane node.

[upgrade] Backing up kubelet config file to /etc/kubernetes/tmp/kubeadm-kubelet-config634954427/config.yaml

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade] The configuration for this node was successfully updated!

[upgrade] Now you should go ahead and upgrade the kubelet package using your package manager.

Upgrade the kubelet and restart the service

apt-get install kubelet=1.30.0-1.1

systemctl daemon-reload

systemctl restart kubelet

Back on the admin workstation

Check the version

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 69m v1.30.0

node01 NotReady,SchedulingDisabled <none> 68m v1.30.0

Returning the node to service

k uncordon node01

node/node01 uncordoned

k get node

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 69m v1.30.0

node01 Ready <none> 69m v1.30.0
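The final check can be automated. The following is a minimal sketch, assuming the default kubectl get nodes column layout (STATUS second, VERSION last); the function name all_nodes_upgraded is made up for this example.

```shell
# Succeeds when every node listed on stdin has the status "Ready" exactly
# (so not "NotReady" or "Ready,SchedulingDisabled") and reports the
# expected version given as the first argument.
all_nodes_upgraded() {
  awk -v want="$1" '
    BEGIN { bad = 0 }
    NR > 1 && NF > 0 { if ($2 != "Ready" || $NF != want) bad = 1 }
    END { exit bad }
  '
}

# Usage against a live cluster (assumes kubectl is configured):
#   kubectl get nodes | all_nodes_upgraded v1.30.0 && echo "upgrade complete"
```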